Incomplete Features

When this image was assembled, these features were not yet completed. Therefore, only the Jira Cards included here are part of this release.

Problem:

Certain Insights Advisor features differ between the RHEL and OCP advisors.

Goal:

Address top-priority UI misalignments between RHEL and OCP advisor. Address UI features dropped from Insights Advisor for OCP GA.

Scope:

Specific tasks and their priorities are tracked in https://issues.redhat.com/browse/CCXDEV-7432

This contains all the Insights Advisor widget deliverables for the OCP release 4.11.

Scope

It covers only minor bug fixes and improvements:

- better error handling during internal outages in data processing

- add "last refresh" timestamp in the Advisor widget

Scenario: Check if the Insights Advisor widget in the OCP WebConsole UI shows the time of the last data analysis

Given: OCP WebConsole UI and the cluster dashboard are accessible

And: CCX external data pipeline is in a working state

And: administrator A1 has access to their cluster's dashboard

And: Insights Operator for this cluster is sending archives

When: administrator A1 clicks on the Insights Advisor widget

Then: the results of the last analysis are shown in the Insights Advisor widget

And: the time of the last analysis is shown in the Insights Advisor widget

Acceptance criteria:

- The time of the last analysis is shown in the Insights Advisor widget for the scenario above

- The way it is presented is defined within the scope of https://issues.redhat.com/browse/CCXDEV-5869 (mockup task)

- The source of this timestamp must be a result of running the Prometheus metric (last archive upload time):

max_over_time(timestamp(changes(insightsclient_request_send_total{status_code="202"}[1m]) > 0)[24h:1m])

Show the error message (mocked in CCXDEV-5868) if the Prometheus metric `cluster_operator_conditions{name="insights"}` reports both the UploadDegraded and Degraded conditions as true at the same time. This state occurs when an Insights Operator (IO) archive upload fails, which indicates problems with the pipeline.

Expected for 4.11 OCP release.

Epic Goal

- Allow admin user to create new alerting rules, targeting metrics in any namespace

- Allow cloning of existing rules to simplify rule creation

- Allow creation of silences for existing alert rules

Why is this important?

- Currently, any platform-related metrics (exposed in an openshift-*, kube-* or default namespace) cannot be used to form a new alerting rule. That makes it very difficult for administrators to enrich our out-of-the-box experience for the OpenShift Container Platform with new rules that may be specific to their environments.

- Additionally, we had requests from customers to allow modifications of our existing, out-of-the-box alerting rules (for instance tweaking the alert expression or changing the severity label). Unfortunately, that is not easy, since most rules come from several open source projects or other OpenShift components, and any modification would make a seamless upgrade no longer seamless. Imagine K8s changes metrics again (see 1.14) and we have to update our rules. We would not know what modifications have been made (even just the threshold might be difficult if upstream changes that as well), and we would not be able to upgrade these rules.

Scenarios

- I'd like to modify the query expression of an existing rule (because the threshold value doesn't match with my environment).

Cloning the existing rule should end up with a new rule in the same namespace.

Modifications can now be done to the new rule.

(Optional) You can silence the existing rule.

- I'd like to create a new rule based on a metric only available to an openshift-* namespace

Create a new PrometheusRule object inside the namespace that includes the metrics you need to form the alerting rule.

- I'd like to update the label of an existing rule.
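The second scenario above (a rule built on a metric that is only available in an openshift-* namespace) might look roughly like the following sketch. The namespace, object name, alert name, metric, and threshold are hypothetical placeholders, not taken from this epic; only the PrometheusRule kind and its monitoring.coreos.com/v1 API group come from prometheus-operator.

```yaml
apiVersion: monitoring.coreos.com/v1
kind: PrometheusRule
metadata:
  name: example-custom-rule          # hypothetical object name
  namespace: openshift-example       # hypothetical openshift-* namespace that exposes the metric
spec:
  groups:
  - name: example.rules
    rules:
    - alert: ExampleHighErrorRate    # hypothetical alert name
      # Hypothetical metric and threshold, tuned to the local environment.
      expr: rate(example_errors_total[5m]) > 0.1
      for: 10m
      labels:
        severity: warning
      annotations:
        summary: Example component error rate is above the site-specific threshold.
```

For the first scenario, the same shape would apply to a cloned rule: copy the original rule's entry, change `expr`, and optionally silence the original.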

Acceptance Criteria

- CI - MUST be running successfully with tests automated

- Release Technical Enablement - Provide necessary release enablement details and documents.

- Ability to distinguish between rules deployed by us (CMO) and user created rules

Dependencies (internal and external)

Previous Work (Optional):

Open questions:

- Distinguish between operator-created rules and user-created rules

Currently no such mechanism exists. This will need to be added to prometheus-operator or cluster-monitoring-operator.

Done Checklist

- CI - CI is running, tests are automated and merged.

- Release Enablement <link to Feature Enablement Presentation>

- DEV - Upstream code and tests merged: <link to meaningful PR or GitHub Issue>

- DEV - Upstream documentation merged: <link to meaningful PR or GitHub Issue>

- DEV - Downstream build attached to advisory: <link to errata>

- QE - Test plans in Polarion: <link or reference to Polarion>

- QE - Automated tests merged: <link or reference to automated tests>

- DOC - Downstream documentation merged: <link to meaningful PR>

CMO should reconcile the platform Prometheus configuration with the alert-relabel-config resources.

DoD

- Alerts changed via alert-relabel-configs are evaluated by the Platform monitoring stack.

- Product alerts which are overridden aren't sent to Alertmanager.
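This card does not spell out the resource schema, so the following is only a sketch. It assumes an AlertRelabelConfig kind in the monitoring.openshift.io/v1alpha1 API group with Prometheus-style relabeling fields; the kind, version, and field names should be verified against the CRD shipped with the release. The object name and the chosen alert are illustrative.

```yaml
apiVersion: monitoring.openshift.io/v1alpha1   # assumed group/version
kind: AlertRelabelConfig                       # assumed kind
metadata:
  name: example-severity-override              # hypothetical name
  namespace: openshift-monitoring
spec:
  configs:
  # Match on the joined values of alertname and severity, then rewrite severity.
  - sourceLabels: [alertname, severity]
    regex: "ExampleAlert;warning"              # hypothetical alert and current severity
    targetLabel: severity
    replacement: critical
    action: Replace
```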

CMO should reconcile the platform Prometheus configuration with the AlertingRule resources.

DoD

- Alerts added via AlertingRule resources are evaluated by the Platform monitoring stack.

Managing PVs at scale for a fleet creates difficulties where "one size does not fit all". The ability for SRE to deploy Prometheus with PVs and have retention based on a desired size would enable easier management of these volumes across the fleet.

The prometheus-operator exposes retentionSize.

| Field | Description |
|---|---|
| retentionSize | Maximum amount of disk space used by blocks. Supported units: B, KB, MB, GB, TB, PB, EB. Ex: 512MB. |

This is a feature request to enable this configuration option via CMO cluster-monitoring-config ConfigMap.
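A sketch of what the requested option could look like, assuming CMO exposes the setting under `prometheusK8s` with the same name and units as the prometheus-operator field. The placement of the field and the 10GB value are assumptions for illustration only.

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: cluster-monitoring-config
  namespace: openshift-monitoring
data:
  config.yaml: |
    prometheusK8s:
      # Assumed field name, mirroring prometheus-operator's retentionSize.
      # Illustrative value; choose a size below the persistent volume capacity.
      retentionSize: 10GB
```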

Epic Goal

- Cluster admins want to configure the retention size for their metrics.

Why is this important?

- While it is possible to define how long metrics should be retained on disk, it's not possible to tell the cluster monitoring operator how much data it should keep. For OSD/ROSA in particular, it would facilitate the management of the fleet if the retention size could be configured based on the persistent volume size because it would avoid issues with the storage getting full and monitoring being down when too many metrics are produced.

Scenarios

- As a cluster admin, I want to define the maximum amount of data to be retained on the persistent volume.

Acceptance Criteria

- CI - MUST be running successfully with tests automated

- Release Technical Enablement - Provide necessary release enablement details and documents.

- The cluster-monitoring-config and user-workload-monitoring-config ConfigMaps allow configuring the retention size for:

- Prometheus (Platform and UWM)

- Thanos Ruler (to be confirmed)

- Proper validation is in place preventing bad user inputs from breaking the stack.

Dependencies (internal and external)

- Thanos ruler doesn't support retention size (only retention time).

Previous Work (Optional):

- None

Open questions:

- None

Done Checklist

- CI - CI is running, tests are automated and merged.

- Release Enablement <link to Feature Enablement Presentation>

- DEV - Upstream code and tests merged: <link to meaningful PR or GitHub Issue>

- DEV - Upstream documentation merged: <link to meaningful PR or GitHub Issue>

- DEV - Downstream build attached to advisory: <link to errata>

- QE - Test plans in Polarion: <link or reference to Polarion>

- QE - Automated tests merged: <link or reference to automated tests>

- DOC - Downstream documentation merged: <link to meaningful PR>

Problem Alignment

The Problem

Today, all individual settings, such as routing configuration, are made in a single configuration file that only admins have access to. If an environment has multiple tenants and each tenant uses a different system to notify its teams of an issue, someone needs to file a request with an admin to add the required settings.

That can be bothersome for individual teams, since requests like that usually disappear in the backlog of an administrator. At the same time, administrators might get tons of requests that they have to look at and prioritize, which takes them away from more crucial work.

We would like to introduce a more self-service approach where individual teams can create their own configuration for their needs without the administrator's involvement.

Last but not least, since Monitoring is deployed as a core service of OpenShift, there are multiple restrictions that the SRE team has to apply to all OSD and ROSA clusters. One restriction concerns customers' use of the central Alertmanager that is owned and managed by the SRE team: due to security concerns, SRE can't give users access to the centrally managed secret so that they could add their own routing information.

High-Level Approach

Provide a new API (based on the Operator CRD approach) as part of the Prometheus Operator that allows creating a subset of the Alertmanager configuration without touching the central Alertmanager configuration file.

Please note that we do not plan to support additional individual webhooks with this work. Customers will need to deploy their own version of the third party webhooks.

Goal & Success

- Allow users to deploy individual configurations that allow setting up Alertmanager for their needs without an administrator.

Solution Alignment

Key Capabilities

- As an OpenShift administrator, I want to control who can CRUD individual configuration so that I can make sure that no unknown third party can touch the central Alertmanager instance shipped within OpenShift Monitoring.

- As a team owner, I want to deploy a routing configuration to push notifications for alerts to my system of choice.

Key Flows

Team A wants to send all their important notifications to a specific Slack channel.

- Administrator gives permission to Team A to allow creating a new configuration CR in their individual namespace.

- Team A creates a new configuration CR.

- Team A configures what alerts should go into their Slack channel.

Open Questions & Key Decisions (optional)

- Do we want to improve anything inside the developer console to allow configuration?
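The Team A key flow above (steps two and three) could look roughly like the following sketch. It assumes the AlertmanagerConfig resource from prometheus-operator at the v1beta1 version; the namespace, object names, Slack channel, Secret, and severity matcher are hypothetical. The first step, granting Team A permission to create the resource in its namespace, would be ordinary RBAC and is not shown.

```yaml
apiVersion: monitoring.coreos.com/v1beta1
kind: AlertmanagerConfig
metadata:
  name: team-a-slack               # hypothetical name
  namespace: team-a                # hypothetical tenant namespace
spec:
  route:
    receiver: slack-team-a
    matchers:
    # Only "important" alerts go to Slack; the label and value are illustrative.
    - name: severity
      matchType: "="
      value: critical
  receivers:
  - name: slack-team-a
    slackConfigs:
    - channel: "#team-a-alerts"    # hypothetical channel
      apiURL:
        name: slack-webhook        # hypothetical Secret in the same namespace
        key: url
```

Keeping the webhook URL in a Secret inside the team's own namespace means the team never needs access to the central Alertmanager secret.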

Epic Goal

- Allow users to manage Alertmanager for user-defined alerts and have the feature being fully supported.

Why is this important?

- Users want to configure alert notifications without admin intervention.

- The feature is currently Tech Preview; it should become generally available to benefit a bigger audience.

Scenarios

- As a cluster admin, I can deploy an Alertmanager service dedicated for user-defined alerts (e.g. separated from the existing Alertmanager already used for platform alerts).

- As an application developer, I can silence alerts from the OCP console.

- As an application developer, I'm not allowed to configure invalid AlertmanagerConfig objects.

Acceptance Criteria

- CI - MUST be running successfully with tests automated

- Release Technical Enablement - Provide necessary release enablement details and documents.

- The AlertmanagerConfig CRD is v1beta1

- The validating webhook service checking AlertmanagerConfig resources is highly-available.

Dependencies (internal and external)

- Prometheus operator upstream should migrate the AlertmanagerConfig CRD from v1alpha1 to v1beta1

- Console enhancements likely to be involved (see below).

Previous Work (Optional):

- Part of the feature is available as Tech Preview (MON-880).

Open questions:

- Coordination with the console team to support the Alertmanager service dedicated for user-defined alerts.

- Migration steps for users that are already using the v1alpha1 CRD.

Done Checklist

* CI - CI is running, tests are automated and merged.

* Release Enablement <link to Feature Enablement Presentation>

* DEV - Upstream code and tests merged: <link to meaningful PR or GitHub Issue>

* DEV - Upstream documentation merged: <link to meaningful PR or GitHub Issue>

* DEV - Downstream build attached to advisory: <link to errata>

* QE - Test plans in Polarion: <link or reference to Polarion>

* QE - Automated tests merged: <link or reference to automated tests>

* DOC - Downstream documentation merged: <link to meaningful PR>

Now that upstream supports AlertmanagerConfig v1beta1 (see MON-2290 and https://github.com/prometheus-operator/prometheus-operator/pull/4709), it should be deployed by CMO.

DoD:

- Kubernetes API exposes and supports the v1beta1 version for AlertmanagerConfig CRD (in addition to v1alpha1).

- Users can manage AlertmanagerConfig v1beta1 objects seamlessly.

- AlertmanagerConfig v1beta1 objects are reconciled in the generated Alertmanager configuration.

As described in https://github.com/openshift/enhancements/blob/ba3dc219eecc7799f8216e1d0234fd846522e88f/enhancements/monitoring/multi-tenant-alerting.md#distinction-between-platform-and-user-alerts, cluster admins want to distinguish platform alerts from user alerts. For this purpose, CMO should provision an external label (openshift_io_alert_source="platform") on prometheus-k8s instances.

Epic Goal

- The goal is to support metrics federation for user-defined monitoring via the /federate Prometheus endpoint (both from within and outside of the cluster).

Why is this important?

- It is already possible to configure remote write for user-defined monitoring to push metrics outside of the cluster but in some cases, the network flow can only go from the outside to the cluster and not the opposite. This makes it impossible to leverage remote write.

- It is already possible to use the /federate endpoint for the platform Prometheus (via the internal service or via the OpenShift route), so not supporting it for UWM makes the experience inconsistent.

- If we don't expose the /federate endpoint for the UWM Prometheus, users would have no supported way to store and query application metrics from a central location.

Scenarios

- As a cluster admin, I want to federate user-defined metrics using the Prometheus /federate endpoint.

- As a cluster admin, I want the /federate endpoint for UWM to be accessible via an OpenShift route.

- As a cluster admin, I want access to the /federate endpoint for UWM to require authentication (with bearer token only) and authorization (the required permissions should match the permissions on the /federate endpoint of the Platform Prometheus).

Acceptance Criteria

- CI - MUST be running successfully with tests automated

- Release Technical Enablement - Provide necessary release enablement details and documents.

- Documentation - information about the recommendations and limitations/caveats of the federation approach.

- User can federate user-defined metrics from within the cluster

- User can federate user-defined metrics from the outside via the OpenShift route.

Dependencies (internal and external)

- None

Previous Work (Optional):

- None

Open questions:

- None

Done Checklist

- CI - CI is running, tests are automated and merged.

- Release Enablement <link to Feature Enablement Presentation>

- DEV - Upstream code and tests merged: <link to meaningful PR or GitHub Issue>

- DEV - Upstream documentation merged: <link to meaningful PR or GitHub Issue>

- DEV - Downstream build attached to advisory: <link to errata>

- QE - Test plans in Polarion: <link or reference to Polarion>

- QE - Automated tests merged: <link or reference to automated tests>

- DOC - Downstream documentation merged: <link to meaningful PR>

DoD

- User can federate UWM metrics from outside of the cluster via the OpenShift route.

- E2E test added to the CMO test suite.

DoD

- User can federate UWM metrics within the cluster from the prometheus-user-workload.openshift-user-workload-monitoring.svc:9092 service

- The service requires authentication via bearer token and authorization (same permissions as for federating platform metrics)

Copy/paste from https://github.com/openshift-cs/managed-openshift/issues/60

Which service is this feature request for?

OpenShift Dedicated and Red Hat OpenShift Service on AWS

What are you trying to do?

Allow ROSA/OSD to integrate with AWS Managed Prometheus.

Describe the solution you'd like

Remote-write of metrics is supported in OpenShift but it does not work with AWS Managed Prometheus since AWS Managed Prometheus requires AWS SigV4 auth.

- Note that Prometheus supports AWS SigV4 since v2.26 and OpenShift 4.9 uses v2.29.

Describe alternatives you've considered

There is the workaround to use the "AWS SigV4 Proxy" but I'd think this is not properly supported by RH.

https://mobb.ninja/docs/rosa/cluster-metrics-to-aws-prometheus/

Additional context

The customer wants to use an open and portable solution to centralize metrics storage and analysis. If they also deploy to other clouds, they don't want to have to re-configure. Since most clouds offer a Prometheus service (or it's easy to self-manage Prometheus), app migration should be simplified.

Epic Goal

The cluster monitoring operator should allow OpenShift customers to configure remote write with all authentication methods supported by upstream Prometheus.

We will extend CMO's configuration API to support the following authentications with remote write:

- Sigv4

- Authorization

- OAuth2

Why is this important?

Customers want to send metrics to AWS Managed Prometheus, which requires sigv4 authentication (see https://docs.aws.amazon.com/prometheus/latest/userguide/AMP-secure-metric-ingestion.html#AMP-secure-auth).

Scenarios

- As a cluster admin, I want to forward platform/user metrics to remote write systems requiring Sigv4 authentication.

- As a cluster admin, I want to forward platform/user metrics to remote write systems requiring OAuth2 authentication.

- As a cluster admin, I want to forward platform/user metrics to remote write systems requiring a custom Authorization header for authentication (e.g. API key).

Acceptance Criteria

- CI - MUST be running successfully with tests automated

- Release Technical Enablement - Provide necessary release enablement details and documents.

- It is possible for a cluster admin to configure any authentication method that is supported by Prometheus upstream for remote write (both platform and user-defined metrics):

- Sigv4

- Authorization

- OAuth2

Dependencies (internal and external)

- In theory none because everything is already supported by the Prometheus operator upstream. We may discover bugs in the upstream implementation though that may require upstream involvement.

Previous Work

- After CMO started exposing the RemoteWrite specification in MON-1069, additional authentication options were added to Prometheus and prometheus-operator, but CMO didn't catch up on these.

Open Questions

- None

Prometheus and Prometheus operator already support sigv4 authentication for remote write. It should be possible to configure the same in the CMO configuration:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        prometheusK8s:
          remoteWrite:
          - url: "https://remote-write.endpoint"
            sigv4:
              # Both keys are read from the same Secret.
              accessKey:
                name: aws-credentials
                key: access
              secretKey:
                name: aws-credentials
                key: secret
              profile: "SomeProfile"
              roleArn: "SomeRoleArn"

DoD:

- Ability to configure sigv4 authentication for remote write in the openshift-monitoring/cluster-monitoring-config configmap

- Ability to configure sigv4 authentication for remote write in the openshift-user-workload-monitoring/user-workload-monitoring-config configmap

Prometheus and Prometheus operator already support custom Authorization for remote write. It should be possible to configure the same in the CMO configuration:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring
    data:
      config.yaml: |
        prometheusK8s:
          remoteWrite:
          - url: "https://remote-write.endpoint"
            authorization:
              type: Bearer
              # The token is read from the referenced Secret key.
              credentials:
                name: credentials
                key: token

DoD:

- Ability to configure custom Authorization for remote write in the openshift-monitoring/cluster-monitoring-config configmap

- Ability to configure custom Authorization for remote write in the openshift-user-workload-monitoring/user-workload-monitoring-config configmap
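The epic also lists OAuth2, for which no example is given. By analogy with the two configurations above, a sketch could look as follows, assuming CMO passes through the `oauth2` fields of the prometheus-operator remote write specification unchanged. The Secret name, keys, token URL, and scope are hypothetical.

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: cluster-monitoring-config
  namespace: openshift-monitoring
data:
  config.yaml: |
    prometheusK8s:
      remoteWrite:
      - url: "https://remote-write.endpoint"
        oauth2:
          # clientId can come from a Secret or a ConfigMap; a Secret is assumed here.
          clientId:
            secret:
              name: oauth2-credentials   # hypothetical Secret
              key: id
          clientSecret:
            name: oauth2-credentials
            key: secret
          tokenUrl: "https://token.endpoint/oauth2/token"   # hypothetical URL
          scopes:
          - metrics.write                # hypothetical scope
```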

Description

As a WMCO user, I want to make sure containerd logging information has been updated in documents and scripts.

Acceptance Criteria

- update must-gather to collect containerd logs

- Internal/Customer Documents and log collecting scripts must have containerd specific information (ex: location of logs).

Feature Overview

We drive OpenShift cross-market customer success and new customer adoption with constant improvements and feature additions to the existing capabilities of our OpenShift Core Networking (SDN and Network Edge). This feature captures that natural progression of the product.

Goals

- Feature enhancements (performance, scale, configuration, UX, ...)

- Modernization (incorporation and productization of new technologies)

Requirements

- Core Networking Stability

- Core Networking Performance and Scale

- Core Networking Extensibility (Multus CNIs)

- Core Networking UX (Observability)

- Core Networking Security and Compliance

In Scope

- Network Edge (ingress, DNS, LB)

- SDN (CNI plugins, openshift-sdn, OVN, network policy, egressIP, egress Router, ...)

- Networking Observability

Out of Scope

There are definitely grey areas, but in general:

- CNV

- Service Mesh

- CNF

Documentation Considerations

Questions to be addressed:

- What educational or reference material (docs) is required to support this product feature? For users/admins? Other functions (security officers, etc)?

- Does this feature have doc impact?

- New Content, Updates to existing content, Release Note, or No Doc Impact

- If unsure and no Technical Writer is available, please contact Content Strategy.

- What concepts do customers need to understand to be successful in [action]?

- How do we expect customers will use the feature? For what purpose(s)?

- What reference material might a customer want/need to complete [action]?

- Is there source material that can be used as reference for the Technical Writer in writing the content? If yes, please link if available.

- What is the doc impact (New Content, Updates to existing content, or Release Note)?

Create a PR in openshift/cluster-ingress-operator to implement configurable router probe timeouts.

The PR should include the following:

- Changes to the ingress operator's ingress controller to allow the user to configure the readiness and liveness probe's timeoutSeconds values.

- Changes to existing unit tests to verify that the new functionality works properly.

- A new E2E test to verify that the new functionality works properly.

User Story: As a customer in a highly regulated environment, I need the ability to secure DNS traffic when forwarding requests to upstream resolvers so that I can better protect DNS traffic and data privacy.

tldr: three basic claims, the rest is explanation and one example

- We cannot improve long term maintainability solely by fixing bugs.

- Teams should be asked to produce designs for improving maintainability/debuggability.

- Specific maintenance items (or investigation of maintenance items) should be placed into planning as peers to PM requests and explicitly prioritized against them.

While bugs are an important metric, fixing bugs is different from investing in maintainability and debuggability. Investing in fixing bugs will help alleviate immediate problems, but doesn't improve the ability to address future problems. You (may) get a code base with fewer bugs, but when you add a new feature, it will still be hard to debug problems and interactions. This pushes a code base towards stagnation where it gets harder and harder to add features.

One alternative is to ask teams to produce ideas for how they would improve future maintainability and debuggability instead of focusing on immediate bugs. This would produce designs that make problem determination, bug resolution, and future feature additions faster over time.

I have a concrete example of one such outcome of focusing on bugs vs quality. We have resolved many bugs about communication failures with ingress by finding problems with point-to-point network communication. We have fixed the individual bugs, but have not improved the code for future debugging. In so doing, we chase many hard-to-diagnose problems across the stack. The alternative is to create a point-to-point network connectivity capability. This would immediately improve bug resolution and stability (detection) for kuryr, ovs, legacy sdn, network-edge, kube-apiserver, openshift-apiserver, authentication, and console. Bug fixing does not produce the same impact.

We need more investment in our future selves. Saying, "teams should reserve this" doesn't seem to be universally effective. Perhaps an approach that directly asks for designs and impacts and then follows up by placing the items directly in planning and prioritizing against PM feature requests would give teams the confidence to invest in these areas and give broad exposure to systemic problems.

Relevant links:

- Documentation:

- Edge Diagnostics Scratchpad, our team's internal diagnostic guide.

- Troubleshooting OCP networking issues - The complete guide, the SDN team's diagnostic guide.

- Linux Performance, Brendan Gregg's guide to analyzing Linux performance issues.

- RFC: A proper feedback loop on Alerts.

- OpenShift Router Reload Technical Overview on Access.

- Performance Scaling HAProxy with OpenShift on Access.

- How to collect worker metrics to troubleshoot CPU load, memory pressure and interrupt issues and networking on worker nodes in OCP 4 on Access.

- OpenShift Performance and Scale Knowledge Base on Mojo, results from OpenShift scalability testing.

- Scalability and performance, OCP 4.5 documentation about the router's currently known scalability limits.

- Scaling OpenShift Container Platform HAProxy Router, OCP 3.11 documentation about the manual performance configuration that was possible in OCP 3.

- Timing web requests with cURL and Chrome from the Cloudflare blog.

- tcpdump advanced filters, some useful tcpdump commands.

- OpenShift SDN - Networking, OCP 3.11 documentation on the SDN (useful background reading).

- Ingress Operator and Controller Status Conditions, design document for improved status condition reporting.

- Observability tips for HAProxy, a slide deck by Willy Tarreau.

- Interesting Traces - Out of Order versus Retransmissions, analysis using tshark.

- The PCP Book: A Complete Documentation of Performance Co-Pilot, by Yogesh Babar.

- Debugging kernel networking bug, brief guide to using SystemTap on RHCOS.

- Troubleshooting throughput issues from the OCP 4.5 documentation.

- Troubleshooting OpenShift Clusters and Workloads.

- Red Hat Enterprise Linux Network Performance Tuning Guide (PDF).

- openshift/enhancements#289 stability: point to point network check, a diagnostic built into the kube-apiserver operator.

- Diagnostic tools:

- dropwatch to watch for packet drops.

- ethtool to check NIC configuration.

- iovisor/bcc: BCC - Tools for BPF-based Linux IO analysis, networking, monitoring, and more to trace and diagnose various issues in the networking stack.

- r-curler to gather timing information about HTTP/HTTPS connections.

- route-monitor, to monitor routes for reachability.

- hping(3), a programmable packet generator.

- OpenTracing / Jaeger in OpenShift.

- node-problem-detector, a possible integration point for new diagnostics.

- Using SystemTap by Brendan Gregg.

- DTrace SystemTap cheatsheet (PDF).

- Visualization and more sophisticated diagnostic tools:

- eldadru/ksniff, kubectl plugin for tcpdump & Wireshark.

- ironcladlou/ditm, Dan's "Dan in the Middle" tool.

- Skydive, network diagnostic and visualization tool.

- ali, a "load testing tool capable of performing real-time analysis" with visualization.

- Testing tools:

- Case studies:

- BZ1763206 is an example of diagnosing DNS latency/timeouts.

- BZ1829779 Investigation details the diagnosis of route latency.

- BZ1845545 is an example of diagnosing misconfigured DNS for an external LB.

- Debugging network stalls on Kubernetes, from the GitHub Blog, about diagnosing Kubernetes performance issues related to ksoftirqd.

Per the 4.6.30 Monitoring DNS Post Mortem, we should add E2E tests to openshift/cluster-dns-operator to reduce the risk that changes to our CoreDNS configuration break DNS resolution for clients.

To begin with, we add E2E DNS testing for 2 or 3 client libraries to establish a framework for testing DNS resolvers; the work of adding additional client libraries to this framework can be left for follow-up stories. Two common libraries are Go's resolver and glibc's resolver. A somewhat common library that is known to have quirks is musl libc's resolver, which uses a shorter timeout value than glibc's resolver and reportedly has issues with the EDNS0 protocol extension. It would also make sense to test Java or other popular languages or runtimes that have their own resolvers.

Additionally, as discussed in our DNS Issue Retro & Testing Coverage meeting on Feb 28th 2024, we decided to add a test for a non-EDNS0 query for a record larger than 512 bytes, which was once an issue in bug OCPBUGS-27397.

The ultimate goal is that the test will inform us when a change to OpenShift's DNS or networking has an effect that may impact end-user applications.

In OCP 4.8 the router was changed to use the "random" balancing algorithm for non-passthrough routes by default. It was previously "leastconn".

Bug https://bugzilla.redhat.com/show_bug.cgi?id=2007581 shows that using "random" by default incurs significant memory overhead for each backend that uses it.

PR https://github.com/openshift/cluster-ingress-operator/pull/663 reverted the change and made "leastconn" the default again (OCP 4.8 onwards).

The analysis in https://bugzilla.redhat.com/show_bug.cgi?id=2007581#c40 shows that the default haproxy behaviour is to multiply the weight (specified in the route CR) by 16 as it builds its data structures for each backend. If no weight is specified then openshift-router sets the weight to 256. If you have many, many thousands of routes then this balloons quickly and leads to a significant increase in memory usage, as highlighted by customer cases attached to BZ#2007581.

The purpose of this issue is to both explore changing the openshift-router default weight (i.e., 256) to something smaller, or indeed unset (assuming no explicit weight has been requested), and to measure the memory usage within the context of the existing perf&scale tests that we use for vetting new haproxy releases.

It may be that the low-hanging change is to not default to weight=256 for backends that only have one pod replica (i.e., if no value specified, and there is only 1 pod replica, then don't default to 256 for that single server entry).

Outcome: does changing the [default] weight value make it feasible to switch back to "random" as the default balancing algorithm for a future OCP release?

Revert router to using "random" once again in 4.11 once analysis is done on impact of weight and static memory allocation.

Feature Overview

Plugin teams need a mechanism to extend the OCP console that is decoupled enough so they can deliver at the cadence of their projects and not be forced into the OCP Console release timelines.

The OCP Console Dynamic Plugin Framework will enable all our plugin teams to do the following:

- Extend the Console

- Deliver UI code with their Operator

- Work in their own git Repo

- Deliver at their own cadence

Goals

- Operators can deliver console plugins separate from the console image and update plugins when the operator updates.

- The dynamic plugin API is similar to the static plugin API to ease migration.

- Plugins can use shared console components such as list and details page components.

- Shared components from core will be part of a well-defined plugin API.

- Plugins can use PatternFly 4 components.

- Cluster admins control what plugins are enabled.

- Misbehaving plugins should not break console.

- Existing static plugins are not affected and will continue to work as expected.

Out of Scope

- Initially we don't plan to make this a public API. The target use is for Red Hat operators. We might reevaluate later when dynamic plugins are more mature.

- We can't avoid breaking changes in console dependencies such as PatternFly even if we don't break the console plugin API itself. We'll need a way for plugins to declare compatibility.

- Plugins won't be sandboxed. They will have full JavaScript access to the DOM and network. Plugins won't be enabled by default, however. A cluster admin will need to enable the plugin.

- This proposal does not cover allowing plugins to contribute backend console endpoints.

Requirements

| Requirement | Notes | isMvp? |
|---|---|---|
| UI to enable and disable plugins | | YES |
| Dynamic Plugin Framework in place | | YES |
| Testing Infra up and running | | YES |
| Docs and read me for creating and testing Plugins | | YES |
| CI - MUST be running successfully with test automation | This is a requirement for ALL features. | YES |
| Release Technical Enablement | Provide necessary release enablement details and documents. | YES |

Documentation Considerations

Questions to be addressed:

- What educational or reference material (docs) is required to support this product feature? For users/admins? Other functions (security officers, etc)?

- Does this feature have doc impact?

- New Content, Updates to existing content, Release Note, or No Doc Impact

- If unsure and no Technical Writer is available, please contact Content Strategy.

- What concepts do customers need to understand to be successful in [action]?

- How do we expect customers will use the feature? For what purpose(s)?

- What reference material might a customer want/need to complete [action]?

- Is there source material that can be used as reference for the Technical Writer in writing the content? If yes, please link if available.

- What is the doc impact (New Content, Updates to existing content, or Release Note)?

Currently, webpack tree shakes PatternFly and only includes the components used by console in its vendor bundle. We need to expose all of the core PatternFly components for use in dynamic plugins, which means we have to disable tree shaking for PatternFly. We should expose this as a separate bundle. This will allow browsers to cache more efficiently and only need to load the PF bundle again when we upgrade PatternFly.

Open Questions

What parts of PatternFly do we consider core?

Acceptance Criteria

- All PatternFly core components are exposed to dynamic plugins

- PatternFly is exposed as a separate bundle that is not part of the main vendor bundle

Feature Overview

- This Section: High-level description of the feature, i.e., an executive summary

- Note: A Feature is a capability or a well defined set of functionality that delivers business value. Features can include additions or changes to existing functionality. Features can easily span multiple teams, and multiple releases.

Goals

- This Section: Provide high-level goal statement, providing user context and expected user outcome(s) for this feature

- …

Requirements

- This Section: A list of specific needs or objectives that a Feature must deliver to satisfy the Feature. Some requirements will be flagged as MVP. If an MVP gets shifted, the feature shifts. If a non-MVP requirement slips, it does not shift the feature.

| Requirement | Notes | isMvp? |
|---|---|---|
| CI - MUST be running successfully with test automation | This is a requirement for ALL features. | YES |
| Release Technical Enablement | Provide necessary release enablement details and documents. | YES |

(Optional) Use Cases

This Section:

- Main success scenarios - high-level user stories

- Alternate flow/scenarios - high-level user stories

- ...

Questions to answer…

- ...

Out of Scope

- …

Background, and strategic fit

This Section: What does the person writing code, testing, documenting need to know? What context can be provided to frame this feature.

Assumptions

- ...

Customer Considerations

- ...

Documentation Considerations

Questions to be addressed:

- What educational or reference material (docs) is required to support this product feature? For users/admins? Other functions (security officers, etc)?

- Does this feature have doc impact?

- New Content, Updates to existing content, Release Note, or No Doc Impact

- If unsure and no Technical Writer is available, please contact Content Strategy.

- What concepts do customers need to understand to be successful in [action]?

- How do we expect customers will use the feature? For what purpose(s)?

- What reference material might a customer want/need to complete [action]?

- Is there source material that can be used as reference for the Technical Writer in writing the content? If yes, please link if available.

- What is the doc impact (New Content, Updates to existing content, or Release Note)?

As a user, I want the ability to run a pod in debug mode.

This should be the equivalent of running: oc debug pod

Acceptance Criteria for MVP

- Build off of the crash-loop back off popover from https://github.com/openshift/console/pull/7302 to include a description of what crash-loop back off is, a link to view logs, a link to view events and a link to debug (container-name) in terminal. If more than one container is crash-looping list them individually.

- Create a debug container page that includes breadcrumbs as well as the terminal to debug. Add an informational alert at the top to make it clear that this is a temporary Pod and closing this page will delete the temporary pod.

- Add debug in terminal as an action to the logs toolbar. Only enable the action when the crash-loop back off status occurs for the selected container. Add a tooltip to explain when the action is disabled.

Assets

Designs (WIP): https://docs.google.com/document/d/1b2n9Ox4xDNJ6AkVsQkXc5HyG8DXJIzU8tF6IsJCiowo/edit#

When viewing the Installed Operators list set to 'All projects' and selecting an operator that is available in 'All namespaces' (globally installed), clicking the operator to view its details takes the user to the details of that operator in its install namespace (the project selector switches to the install namespace).

It can then be disorienting to look at the lists of custom resource instances and see them all blank, since the lists show instances only in the currently selected project (the install namespace) and not across all namespaces the operator is available in.

It is likely that making use of the new Operator resource will improve this experience (CONSOLE-2240), though that may still be some releases away. It should be considered whether a "short term" fix is worthwhile in the meantime.

Note: The informational alert was not implemented. It was decided that since "All namespaces" is displayed in the radio button, the alert was not needed.

During master nodes upgrade when nodes are getting drained there's currently no protection from two or more operands going down. If your component is required to be available during upgrade or other voluntary disruptions, please consider deploying PDB to protect your operands.

The effort is tracked in https://issues.redhat.com/browse/WRKLDS-293.

Example:

Acceptance Criteria:

1. Create PDB controller in console-operator for both console and downloads pods

2. Add e2e tests for PDB in single node and multi node cluster

Note: We should consider backporting this to 4.10

Goal

Add support for PDB (Pod Disruption Budget) to the console.

Requirements:

- Add a list, detail, and yaml view (with samples) for PDBs. In addition, update the workloads page to support PDBs as well.

- For the PDB list page include a table with name, namespace, selector, availability, allowed disruptions and created. In addition to the table, provide the main call to action to create a PDB.

- For the PDB details page provide a Details, YAML and Pods tab. The Pods tab will include a list of pods associated with the PDB - make sure to surface the owner column.

- When users create a PDB from the list page, take them to the YAML and provide samples to enhance the creation experience. Sample 1: Set max unavailable to 0, Sample 2: Set min unavailable to 25% (confirming samples with stakeholders). In the case that a PDB has already been applied, warn users that it is not recommended to add another. Cover use cases as well that keep users from creating poor policies - for example, setting the minimum available to zero.

- Add the ability to add/edit/view PDBs on a workload. If we edit a PDB applied to multiple workloads, warn users that this change will affect all workloads and not only the one they are currently editing. When a PDB has been applied, add a new field to the details page with a link to the PDB and policy.
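To make the YAML samples and the "poor policy" warning more concrete, here is a small sketch that builds a `policy/v1` PodDisruptionBudget manifest as a plain dictionary and flags a zero minimum-available budget. The helper names and the warning text are hypothetical, and the second sample is read here as `minAvailable: 25%`, which is an assumption about the intended field; the final samples were still being confirmed with stakeholders.

```python
def build_pdb(name, namespace, match_labels, min_available=None, max_unavailable=None):
    """Build a PodDisruptionBudget manifest; exactly one budget field may be set."""
    if (min_available is None) == (max_unavailable is None):
        raise ValueError("set exactly one of min_available or max_unavailable")
    spec = {"selector": {"matchLabels": dict(match_labels)}}
    if min_available is not None:
        spec["minAvailable"] = min_available
    else:
        spec["maxUnavailable"] = max_unavailable
    return {
        "apiVersion": "policy/v1",
        "kind": "PodDisruptionBudget",
        "metadata": {"name": name, "namespace": namespace},
        "spec": spec,
    }


def policy_warnings(pdb):
    """Return warnings for budgets that protect nothing (hypothetical UI check)."""
    warnings = []
    if pdb["spec"].get("minAvailable") in (0, "0", "0%"):
        warnings.append("minAvailable is zero: this budget never blocks a disruption")
    return warnings


# Sample 1: max unavailable set to 0.
sample_1 = build_pdb("sample-1", "demo", {"app": "web"}, max_unavailable=0)
# Sample 2: assumed to mean minAvailable of 25%.
sample_2 = build_pdb("sample-2", "demo", {"app": "web"}, min_available="25%")
# A poor policy the console should warn about.
poor = build_pdb("poor", "demo", {"app": "web"}, min_available=0)

print(policy_warnings(sample_1), policy_warnings(sample_2), policy_warnings(poor))
```

Serializing these dictionaries to YAML would give the kind of sample content the creation flow could offer.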

Designs:

- Exploratory designs (by Chris): https://www.sketch.com/s/a2668252-07fe-4472-a96f-d3bf94423959/p/AF1CAEE1-DB56-40B7-ADE8-970EA4D1F9

- Final designs (by Thi): Marvel | Doc

OCP/Telco Definition of Done

Epic Template descriptions and documentation.

<--- Cut-n-Paste the entire contents of this description into your new Epic --->

Epic Goal

- Rebase OpenShift components to k8s v1.24

Why is this important?

- Rebasing ensures components work with the upcoming release of Kubernetes

- Address tech debt related to upstream deprecations and removals.

Scenarios

- ...

Acceptance Criteria

- CI - MUST be running successfully with tests automated

- Release Technical Enablement - Provide necessary release enablement details and documents.

- ...

Dependencies (internal and external)

- k8s 1.24 release

Previous Work (Optional):

- …

Open questions:

- …

Done Checklist

- CI - CI is running, tests are automated and merged.

- Release Enablement <link to Feature Enablement Presentation>

- DEV - Upstream code and tests merged: <link to meaningful PR or GitHub Issue>

- DEV - Upstream documentation merged: <link to meaningful PR or GitHub Issue>

- DEV - Downstream build attached to advisory: <link to errata>

- QE - Test plans in Polarion: <link or reference to Polarion>

- QE - Automated tests merged: <link or reference to automated tests>

- DOC - Downstream documentation merged: <link to meaningful PR>

Rebase openshift/builder to k8s 1.24

Feature Overview

- As an infrastructure owner, I want a repeatable method to quickly deploy the initial OpenShift cluster.

- As an infrastructure owner, I want to install the first (management, hub, “cluster 0”) cluster to manage other (standalone, hub, spoke, hub of hubs) clusters.

Goals

- Enable customers and partners to successfully deploy a single “first” cluster in disconnected, on-premises settings

Requirements

4.11 MVP Requirements

- Customers and partners need to be able to download the installer

- Enable customers and partners to deploy a single “first” cluster (cluster 0) using single node, compact, or highly available topologies in disconnected, on-premises settings

- Installer must support advanced network settings such as static IP assignments, VLANs and NIC bonding for on-premises metal use cases, as well as DHCP and PXE provisioning environments.

- Installer needs to support automation, including integration with third-party deployment tools, as well as user-driven deployments.

- In the MVP automation has higher priority than interactive, user-driven deployments.

- For bare metal deployments, we cannot assume that users will provide us the credentials to manage hosts via their BMCs.

- Installer should prioritize support for platforms None, baremetal, and VMware.

- The installer will focus on a single version of OpenShift, and a different build artifact will be produced for each different version.

- The installer must not depend on a connected registry; however, the installer can optionally use a previously mirrored registry within the disconnected environment.

Use Cases

- As a Telco partner engineer (Site Engineer, Specialist, Field Engineer), I want to deploy an OpenShift cluster in production with limited or no additional hardware and don’t intend to deploy more OpenShift clusters [Isolated edge experience].

- As an Enterprise infrastructure owner, I want to manage the lifecycle of multiple clusters in 1 or more sites by first installing the first (management, hub, “cluster 0”) cluster to manage other (standalone, hub, spoke, hub of hubs) clusters [Cluster before your cluster].

- As a Partner, I want to package OpenShift for large scale and/or distributed topology with my own software and/or hardware solution.

- As a large enterprise customer or Service Provider, I want to install a “HyperShift Tugboat” OpenShift cluster in order to offer a hosted OpenShift control plane at scale to my consumers (DevOps Engineers, tenants) that allows for fleet-level provisioning for low CAPEX and OPEX, much like AKS or GKE [Hypershift].

- As a new, novice to intermediate user (Enterprise Admin/Consumer, Telco Partner integrator, RH Solution Architect), I want to quickly deploy a small OpenShift cluster for PoC/Demo/Research purposes.

Questions to answer…

Out of Scope

Out of scope use cases (that are part of the Kubeframe/factory project):

- As a Partner (OEMs, ISVs), I want to install and pre-configure OpenShift with my hardware/software in my disconnected factory, while allowing further (minimal) reconfiguration of a subset of capabilities later at a different site by different set of users (end customer) [Embedded OpenShift].

- As an Infrastructure Admin at an Enterprise customer with multiple remote sites, I want to pre-provision OpenShift centrally prior to shipping and activating the clusters in remote sites.

Background, and strategic fit

- This Section: What does the person writing code, testing, documenting need to know? What context can be provided to frame this feature.

Assumptions

- The user has access only to the target nodes that will form the cluster and will boot them with the image presented locally via a USB stick. This scenario is common in sites with restricted access, such as government infrastructure, where only users with security clearance can interact with the installation and where software is allowed to enter the premises (on a USB stick, DVD, SD card, etc.) but never allowed to come back out. Users can't bring in supporting devices such as laptops or phones.

- The user has remote access to the BMCs of the target nodes (e.g. iDRAC, iLO) and can map an image as virtual media from their computer. This scenario is common in data centers where the customer provides network access to the BMCs of the target nodes.

- We cannot assume that we will have access to a computer to run an installer or installer helper software.

Customer Considerations

- ...

Documentation Considerations

Questions to be addressed:

- What educational or reference material (docs) is required to support this product feature? For users/admins? Other functions (security officers, etc)?

- Does this feature have doc impact?

- New Content, Updates to existing content, Release Note, or No Doc Impact

- If unsure and no Technical Writer is available, please contact Content Strategy.

- What concepts do customers need to understand to be successful in [action]?

- How do we expect customers will use the feature? For what purpose(s)?

- What reference material might a customer want/need to complete [action]?

- Is there source material that can be used as reference for the Technical Writer in writing the content? If yes, please link if available.

- What is the doc impact (New Content, Updates to existing content, or Release Note)?

References

Epic Goal

- As an OpenShift infrastructure owner, I need to be able to integrate the installation of my first on-premises OpenShift cluster with my automation flows and tools.

- As an OpenShift infrastructure owner, I must be able to provide the CLI tool with manifests that contain the definition of the cluster I want to deploy

- As an OpenShift Infrastructure owner, I must be able to get the validation errors in a programmatic way

- As an OpenShift Infrastructure owner, I must be able to get the events and progress of the installation in a programmatic way

- As an OpenShift Infrastructure owner, I must be able to retrieve the kubeconfig and OpenShift Console URL in a programmatic way

Why is this important?

- When deploying clusters with a large number of hosts and when deploying many clusters, it is common to require automated installations.

- Customers and partners usually use third party tools of their own to orchestrate the installation.

- For Telco RAN deployments, Telco partners need to repeatably deploy multiple OpenShift clusters in parallel to multiple sites at-scale, with no human intervention.

Scenarios

- Monitoring flow:

- I generate all the manifests for the cluster,

- call the CLI tool pointing to the manifests path,

- Obtain the installation image from the nodes

- Use my infrastructure capabilities to boot the image on the target nodes

- Use the tool to connect to assisted service to get validation status and events

- Use the tool to retrieve credentials and URL for the deployed cluster

Acceptance Criteria

- Backward compatibility between OCP releases with automation manifests (they can be applied to a newer version of OCP).

- Installation progress and events can be tracked programmatically

- Validation errors can be obtained programmatically

- Kubeconfig and console URL can be obtained programmatically

- CI - MUST be running successfully with tests automated

- Release Technical Enablement - Provide necessary release enablement details and documents.

Done Checklist

- CI - CI is running, tests are automated and merged.

- Release Enablement <link to Feature Enablement Presentation>

- DEV - Upstream code and tests merged: <link to meaningful PR or GitHub Issue>

- DEV - Upstream documentation merged: <link to meaningful PR or GitHub Issue>

- DEV - Downstream build attached to advisory: <link to errata>

- QE - Test plans in Polarion: <link or reference to Polarion>

- QE - Automated tests merged: <link or reference to automated tests>

- DOC - Downstream documentation merged: <link to meaningful PR>

References

User Story:

As a deployer, I want to be able to:

- Get the credentials for the cluster that is going to be deployed

so that I can achieve

- Checking the installed cluster for installation completion

- Connect and administer the cluster that gets installed

Currently the Assisted Service generates the credentials by running the ignition generation step of the openshift-installer. This is why the credentials are only retrievable from the REST API towards the end of the installation.

In the BILLI usage, which takes down the assisted service before the installation is complete, there is no obvious point at which to alert the user that they should retrieve the credentials. This means that we need to do one of the following:

- Allow the user to pass the admin key that will then get signed by the generated CA and replace the key that is made by openshift-installer (would mean new functionality in AI)

- Allow the key to be retrieved over SSH with the fleeting command from node0 (after it has been generated). The command should be able to wait until retrieval is possible

- Have the possibility to POST it somewhere

Acceptance Criteria:

- The admin key is generated and usable to check for installation completeness

- (optional) https://issues.redhat.com/link.to.spike

- Engineering detail 1

- Engineering detail 2

This requires/does not require a design proposal.

This requires/does not require a feature gate.

Feature Overview

The AWS-specific code added in OCPPLAN-6006 needs to become GA and with this we want to introduce a couple of Day2 improvements.

Currently the AWS tags are defined and applied at installation time only and saved in the infrastructure CRD's status field for further use by operators, which in turn only add the tags when creating resources.

Saving in the status field means it's not included in Velero backups, which is a crucial feature for customers and Day2.

Thus the status.resourceTags field should be deprecated in favour of a newly created spec.resourceTags with the same content. The installer should only populate the spec, consumers of the infrastructure CRD must favour the spec over the status definition if both are supplied, otherwise the status should be honored and a warning shall be issued.
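The precedence rule described above can be sketched as follows. This is an illustration only: the function name and logger name are hypothetical, and the tags are modelled as plain key/value dictionaries, which simplifies how the infrastructure resource actually stores them.

```python
import logging

logger = logging.getLogger("resource_tags")  # hypothetical logger name


def effective_resource_tags(spec_tags, status_tags):
    """Prefer spec.resourceTags; fall back to the deprecated status field with a warning."""
    if spec_tags:
        # Spec wins whenever it is supplied, including when both are present.
        return dict(spec_tags)
    if status_tags:
        logger.warning("status.resourceTags is deprecated; use spec.resourceTags instead")
        return dict(status_tags)
    return {}


print(effective_resource_tags({"team": "spec"}, {"team": "status"}))
print(effective_resource_tags(None, {"team": "status"}))
```

A consumer that follows this pattern keeps working on clusters that only carry the status field while steering operators toward the spec.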

Being part of the spec, the behaviour should also tag existing resources that do not have the tags yet and once the tags in the infrastructure CRD are changed all the AWS resources should be updated accordingly.

On AWS this can be done without re-creating any resources (the behaviour is basically an upsert by tag key) and is possible without service interruption as it is a metadata operation.

Tag deletes continue to be out of scope, as the customer can still have custom tags applied to the resources that we do not want to delete.
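The "upsert by tag key, never delete" behaviour can be illustrated with a short sketch. The function names are hypothetical and no AWS API is called; the code only computes what the resulting tag set would be and which tags would need to be written.

```python
def upsert_tags(existing, desired):
    """Return the tag set after an upsert: desired keys win, other keys are kept."""
    merged = dict(existing)
    merged.update(desired)
    return merged


def tags_to_apply(existing, desired):
    """Return only the desired tags that are missing or differ on the resource."""
    return {key: value for key, value in desired.items() if existing.get(key) != value}


on_resource = {"team": "a", "customer-owned": "keep-me"}
from_infrastructure = {"team": "b", "env": "prod"}

print(tags_to_apply(on_resource, from_infrastructure))
print(upsert_tags(on_resource, from_infrastructure))
```

Because nothing is ever removed, a customer-applied tag such as `customer-owned` survives every reconciliation, and a second pass over an already updated resource yields nothing to write.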

Due to the ongoing intree/out of tree split on the cloud and CSI providers, this should not apply to clusters with intree providers (!= "external").

Once confident we have all components updated, we should introduce an end2end test that makes sure we never create resources that are untagged.

After that, we can remove the experimental flag and make this a GA feature.

Goals

- Inclusion in the cluster backups

- Flexibility of changing tags during cluster lifetime, without recreating the whole cluster

Requirements

- This Section: A list of specific needs or objectives that a Feature must deliver to satisfy the Feature. Some requirements will be flagged as MVP. If an MVP gets shifted, the feature shifts. If a non-MVP requirement slips, it does not shift the feature.

| Requirement | Notes | isMvp? |
|---|---|---|
| CI - MUST be running successfully with test automation | This is a requirement for ALL features. | YES |
| Release Technical Enablement | Provide necessary release enablement details and documents. | YES |

List any affected packages or components.

- Installer

- Cluster Infrastructure

- Storage

- Node

- NetworkEdge

- Internal Registry

- CCO

RFE-1101 described user-defined tags for AWS resources provisioned by an OCP cluster. Currently, users can define tags that are added to the resources during creation. These tags cannot be updated subsequently. The propagation of the tags is controlled using an experimental flag. Before this feature goes GA we should define and implement a mechanism to exclude any experimental flags. Day2 operations and deletion of tags are not in scope.

RFE-2012 aims to make the user-defined resource tags feature GA. This means that user defined tags should be updatable.

Currently the user-defined tags during install are passed directly as parameters of the Machine and Machineset resources for the master and worker. As a result these tags cannot be updated by consulting the Infrastructure resource of the cluster where the user defined tags are written.

The MCO should be changed such that during provisioning the MCO looks up the values of the tags in the Infrastructure resource and adds the tags during creation of the EC2 resources. The MCO should also watch the infrastructure resource for changes and when the resource tags are updated it should update the tags on the EC2 instances without restarts.

Acceptance Criteria:

An e2e test where the ResourceTags are updated and the test then verifies that the tags on the EC2 instances are updated without restarts. Now moved to CFE-179.

Feature Overview

Customers are asking for improvements to the upgrade experience (both over-the-air and disconnected). This is a feature tracking epics required to get that work done.

Goals

- Have an option to do upgrades in more discrete steps under admin control. Specifically, these steps are:

- Control plane upgrade

- Worker nodes upgrade

- Workload enabling upgrade (i.e., router, other components) or infra nodes

- Better visibility into any errors during the upgrades and documentation of what the errors mean and how to recover.

- A user experience around an end-to-end backup and restore after a failed upgrade

OTA-810 - Better Documentation:

- Backup procedures before upgrades.

- More control over worker upgrades (with tagged pools between user Vs admin)

- The kinds of pre-upgrade tests that are run, the errors that are flagged and what they mean and how to address them.

- Better explanation of each discrete step in upgrades, and what each CVO Operator is doing and potential errors, troubleshooting and mitigating actions.

References

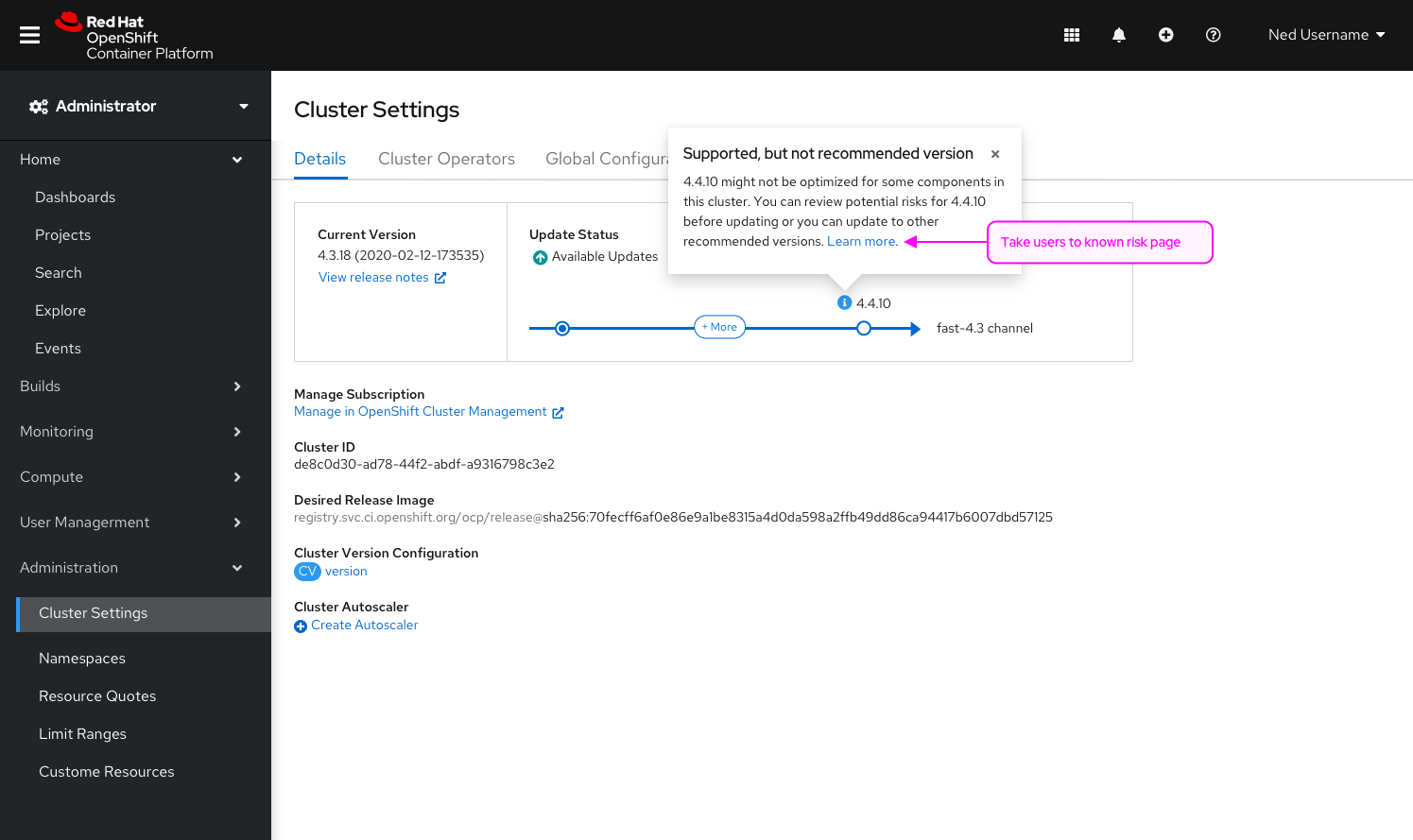

Epic Goal

- Provide a one click option to perform an upgrade which pauses all non master pools

Why is this important?

- Customers are increasingly asking that the overall upgrade is broken up into more digestible pieces

- This is the limit of what's possible today

- R&D work will be done in the future to allow for further bucketing of upgrades into Control Plane, Worker Nodes, and Workload Enabling components (i.e., router). That will, however, take much more consideration and rearchitecting.

Scenarios

- An admin selecting their upgrade is offered two options "Upgrade Cluster" and "Upgrade Control Plane"

- If the admin selects Upgrade Cluster they get the pre 4.10 behavior

- If the admin selects Upgrade Control Plane all non master pools are paused and an upgrade is initiated

- A tooltip should clarify what the difference between the two is

- The pool progress bars should indicate pause/unpaused status, non master pools should allow for unpausing
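The difference between the two options in the scenarios above can be sketched as a small selection function. The mode strings and function name are hypothetical, and the control plane pool is assumed to be the one named `master`; the sketch only decides which pools would be paused before the upgrade starts.

```python
MASTER_POOL = "master"  # assumed name of the control plane machine config pool


def pools_to_pause(pool_names, upgrade_mode):
    """Return the pools to pause before starting the upgrade.

    upgrade_mode is a hypothetical label: "cluster" or "control-plane".
    """
    if upgrade_mode == "cluster":
        # Pre-4.10 behaviour: nothing is paused, every pool follows the upgrade.
        return []
    if upgrade_mode == "control-plane":
        # Pause every non-master pool; admins can unpause each one later.
        return [name for name in pool_names if name != MASTER_POOL]
    raise ValueError(f"unknown upgrade mode: {upgrade_mode!r}")


pools = ["master", "worker", "infra"]
print(pools_to_pause(pools, "cluster"))
print(pools_to_pause(pools, "control-plane"))
```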

Acceptance Criteria

- CI - MUST be running successfully with tests automated

- Release Technical Enablement - Provide necessary release enablement details and documents.

- ...

Dependencies (internal and external)

- ...

Previous Work (Optional):

- While this epic doesn't specifically target upgrading from 4.N to 4.N+1 to 4.N+2 with non master pools paused it would fundamentally enable that and it would simplify the UX described in Paused Worker Pool Upgrades

Open questions:

- …

Done Checklist

- CI - CI is running, tests are automated and merged.

- Release Enablement <link to Feature Enablement Presentation>

- DEV - Upstream code and tests merged: <link to meaningful PR or GitHub Issue>

- DEV - Upstream documentation merged: <link to meaningful PR or GitHub Issue>

- DEV - Downstream build attached to advisory: <link to errata>

- QE - Test plans in Polarion: <link or reference to Polarion>

- QE - Automated tests merged: <link or reference to automated tests>

- DOC - Downstream documentation merged: <link to meaningful PR>

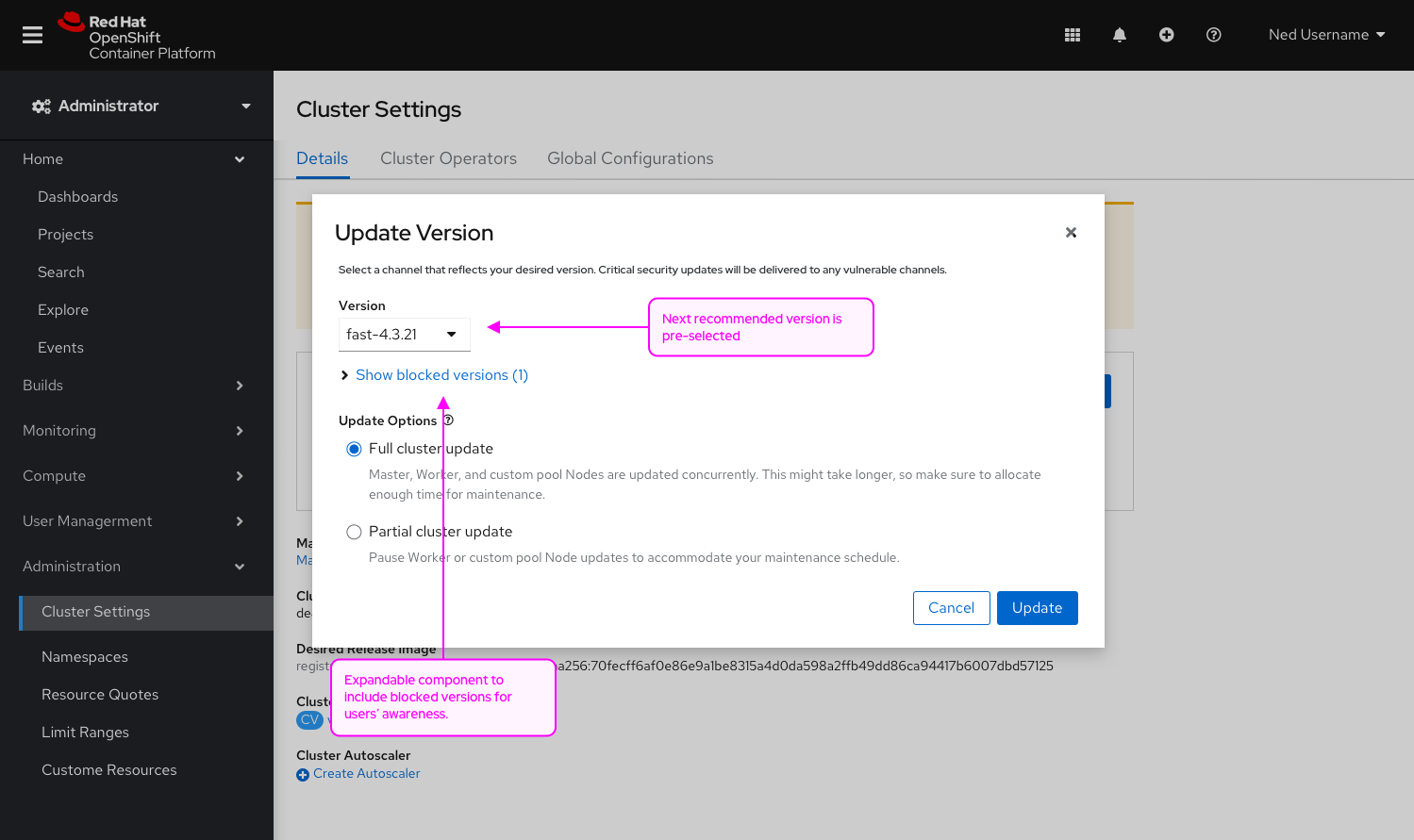

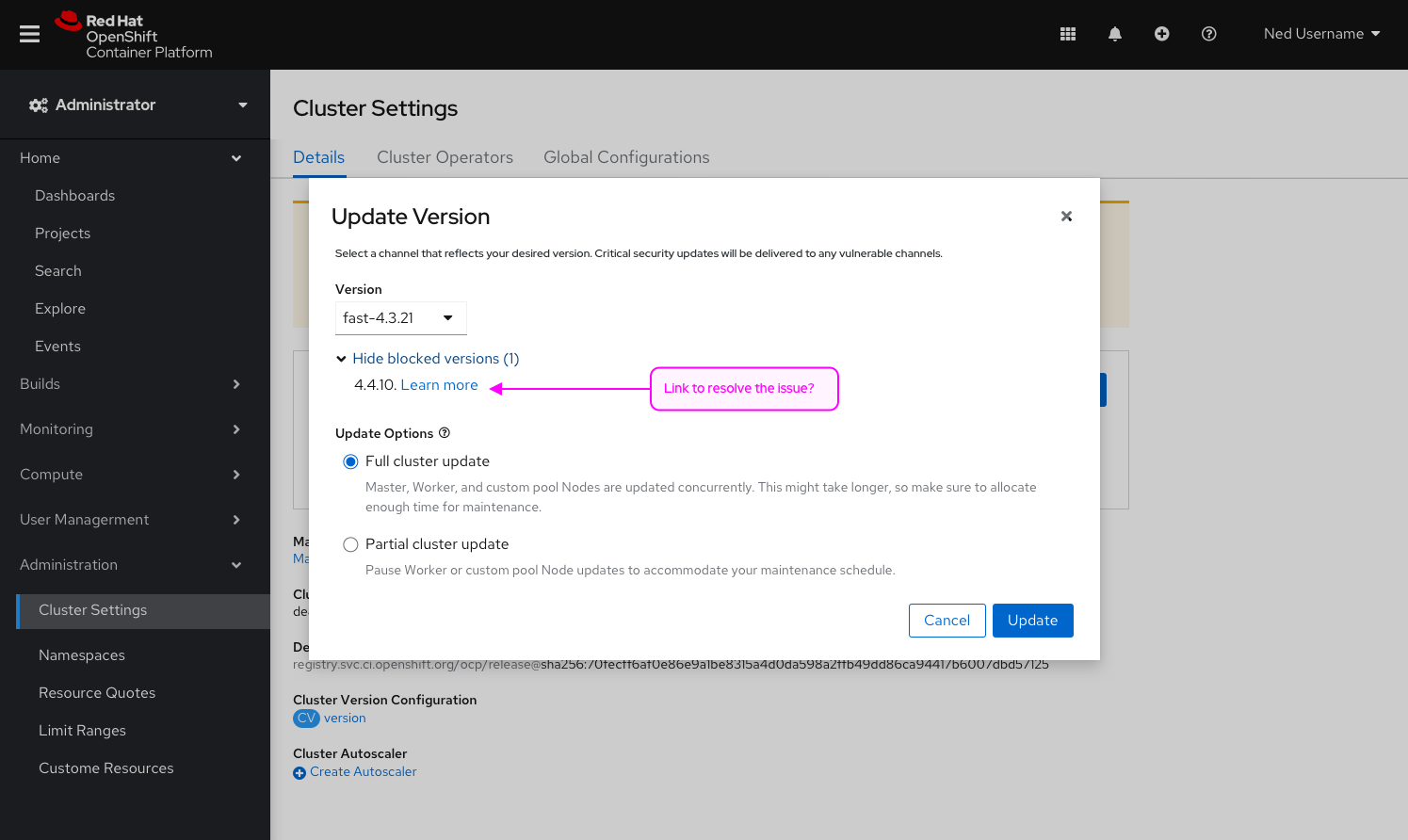

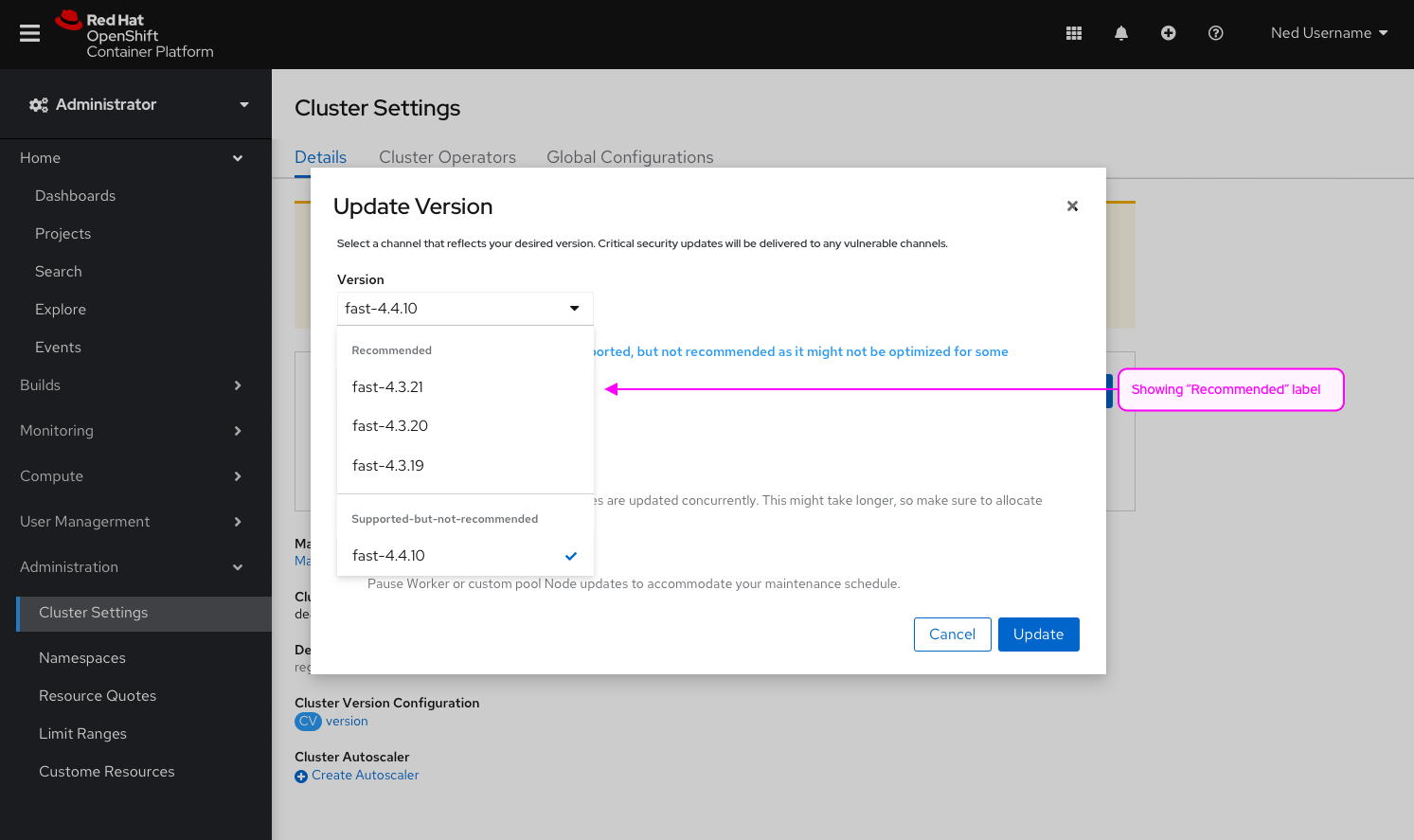

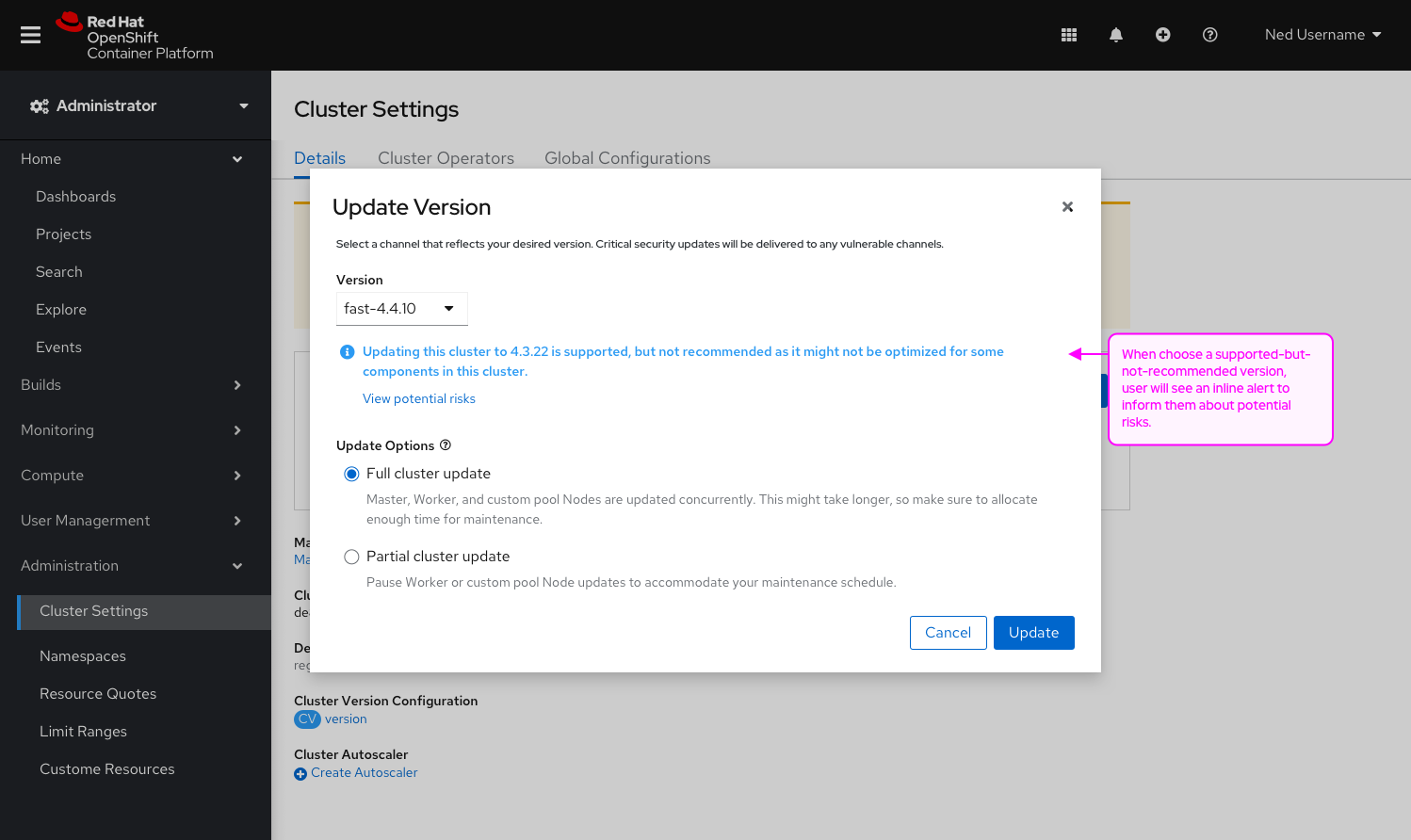

Goal

Add the ability to choose between a full cluster upgrade (which exists today) or control plane upgrade (which will pause all worker pools) in the console.

Background

Currently in the console, users only have the ability to complete a full cluster upgrade. For many customers, upgrades take longer than what their maintenance window allows. Users need the ability to upgrade the control plane independently of the other worker nodes.

Ex. Upgrades of huge clusters may take too long so admins may do the control plane this weekend, worker-pool-A next weekend, worker-pool-B the weekend after, etc. It is all at a pool level, they will not be able to choose specific hosts.

Requirements

- Changes to the Update modal:

- Add the ability to choose between a cluster upgrade and a control plane upgrade (the design does not default to a selection but rather disables the update button to force the user to make a conscious decision)

- link out to documentation to learn more about update strategies

- Changes to the in progress check list:

- Add a status above the worker pool section to let users know that all worker pools are paused and an action to resume all updates

- Add a "resume update" button for each worker pool entry

- Changes to the update status:

- When all master pools are updated successfully, change the status from what we have today "Up to date" to something like "Control plane up to date - all worker pools paused"

- Add an inline alert that lets users know there is a 60-day window to update all worker pools. In the alert, convey that worker pools can remain paused as long as is normally safe, that is, until certificate rotation becomes critical at about 60 days. The admin should be advised to unpause them in order to complete the full upgrade. If the MCPs are paused, certificate rotation does not happen, which causes the cluster to become degraded and causes failures in multiple 'oc' commands, including but not limited to 'oc debug', 'oc logs', 'oc exec' and 'oc attach'. (Are we missing anything else here?) Inline alert logic:

- From day 60 to day 10 use the default alert.

- From day 10 to day 3 use the warning alert.

- From day 3 to 0 use the critical alert and continue to persist until resolved.
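The alert thresholds above can be expressed as a small function of the days remaining in the window. The function name and return labels are hypothetical, and the handling of the shared boundaries (day 10 and day 3) is an assumption, since the ranges as written overlap at those points.

```python
def alert_variant(days_remaining):
    """Map days remaining in the 60-day window to an inline alert variant.

    Assumption: boundary days fall into the more severe bucket.
    """
    if days_remaining <= 3:
        # Covers day 3 down to 0 and stays critical afterwards until resolved.
        return "critical"
    if days_remaining <= 10:
        return "warning"
    return "default"


for days in (60, 11, 10, 4, 3, 0, -2):
    print(days, alert_variant(days))
```

Treating negative values as critical is one way to model "continue to persist until resolved" once the window has elapsed.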

Design deliverables:

Goal

Improve the UX on the machine config pool page to reflect the new enhancements on the cluster settings that allow users to update the control plane only.

Background

Currently in the console, users only have the ability to complete a full cluster upgrade. For many customers, upgrades take longer than what their maintenance window allows. Users need the ability to upgrade the control plane independently of the other worker nodes.

Ex. Upgrades of huge clusters may take too long so admins may do the control plane this weekend, worker-pool-A next weekend, worker-pool-B the weekend after, etc. It is all at a pool level, they will not be able to choose specific hosts.

Requirements

- Changes to the table:

- Remove "Updated, updating and paused" columns. We could also consider adding column management to this table and hide those columns by default.

- Add "Update status" as a column, and surface the same status on cluster settings. Not true or false values but instead updating, paused, and up to date.

- Surface the update action in the table row.

- Add an inline alert that lets users know there is a 60-day window to update all worker pools. In the alert, convey that worker pools can remain paused as long as is normally safe, that is, until certificate rotation becomes critical at about 60 days. The admin should be advised to unpause them in order to complete the full upgrade. If the MCPs are paused, certificate rotation does not happen, which causes the cluster to become degraded and causes failures in multiple 'oc' commands, including but not limited to 'oc debug', 'oc logs', 'oc exec' and 'oc attach'. (Are we missing anything else here?) Add the same alert logic to this page as on the cluster settings:

- From day 60 to day 10 use the default inline alert.

- From day 10 to day 3 use the warning inline alert.

- From day 3 to 0 use the critical alert and continue to persist until resolved.
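For the single "Update status" column requested in the table changes above, one possible way to collapse the three boolean columns into one label is sketched below. The function name, the precedence order (paused first, then updating, then updated) and the "Unknown" fallback are all assumptions made for illustration, not decisions recorded in this card.

```python
def update_status(paused, updating, updated):
    """Collapse the three boolean pool columns into one status label."""
    if paused:
        # Assumed precedence: a paused pool is reported as paused regardless of the rest.
        return "Paused"
    if updating:
        return "Updating"
    if updated:
        return "Up to date"
    return "Unknown"  # hypothetical fallback when no flag is set


print(update_status(paused=True, updating=False, updated=False))
print(update_status(paused=False, updating=True, updated=False))
print(update_status(paused=False, updating=False, updated=True))
```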

Design deliverables:

OCP/Telco Definition of Done

Feature Template descriptions and documentation.

Feature Overview

- Connect OpenShift workloads to Google services with Google Workload Identity

Enable customers to access Google services from workloads on OpenShift clusters using Google Workload Identity (aka WIF)

https://cloud.google.com/kubernetes-engine/docs/concepts/workload-identity

Goals

- Customers want to be able to manage and operate OpenShift on Google Cloud Platform with workload identity, much like they do with AWS + STS or Azure + workload identity.

- Customers want to be able to manage and operate operators and customer workloads on top of OCP on GCP with workload identity.

Requirements

- Add support to CCO for installation and upgrade using both UPI and IPI methods with GCP workload identity.

- Support installs and upgrades for connected and disconnected/restricted environments.

- Support the use of Operators with GCP workload identity with minimal friction.

- Support for HyperShift and non-HyperShift clusters.

This Section: A list of specific needs or objectives that a Feature must deliver to satisfy the Feature. Some requirements will be flagged as MVP. If an MVP gets shifted, the feature shifts. If a non MVP requirement slips, it does not shift the feature.

| Requirement | Notes | isMvp? |
|---|---|---|
| CI - MUST be running successfully with test automation | This is a requirement for ALL features. | YES |
| Release Technical Enablement | Provide necessary release enablement details and documents. | YES |

(Optional) Use Cases

This Section:

- Main success scenarios - high-level user stories

- Alternate flow/scenarios - high-level user stories

- ...

Questions to answer…

- ...

Out of Scope

- …

Background, and strategic fit

This Section: What does the person writing code, testing, documenting need to know? What context can be provided to frame this feature.

Assumptions

- ...

Customer Considerations

- ...

Documentation Considerations

Questions to be addressed:

- What educational or reference material (docs) is required to support this product feature? For users/admins? Other functions (security officers, etc)?

- Does this feature have doc impact?

- New Content, Updates to existing content, Release Note, or No Doc Impact

- If unsure and no Technical Writer is available, please contact Content Strategy.

- What concepts do customers need to understand to be successful in [action]?

- How do we expect customers will use the feature? For what purpose(s)?

- What reference material might a customer want/need to complete [action]?

- Is there source material that can be used as reference for the Technical Writer in writing the content? If yes, please link if available.

- What is the doc impact (New Content, Updates to existing content, or Release Note)?

Epic Goal

- Complete the implementation for GCP workload identity, including support and documentation.

Why is this important?

- Many customers want to follow best security practices for handling credentials.

Acceptance Criteria

- CI - MUST be running successfully with tests automated

- Release Technical Enablement - Provide necessary release enablement details and documents.

Dependencies (internal and external)

Open questions:

Done Checklist

- CI - CI is running, tests are automated and merged.

- Release Enablement <link to Feature Enablement Presentation>

- DEV - Upstream code and tests merged: <link to meaningful PR or GitHub Issue>

- DEV - Upstream documentation merged: <link to meaningful PR or GitHub Issue>

- DEV - Downstream build attached to advisory: <link to errata>

- QE - Test plans in Polarion: <link or reference to Polarion>

- QE - Automated tests merged: <link or reference to automated tests>

- DOC - Downstream documentation merged: <link to meaningful PR>

We need to ensure the following in the OpenShift operators:

1) Make sure the operator uses version v0.0.0-20210218202405-ba52d332ba99 or later of the golang.org/x/oauth2 module.

2) Mount the OIDC token in the operator pod; this needs to go in the deployment. We have done it for cluster-image-registry-operator here

3) For workload identity to work, the GCP credentials that the operator pod uses should be of the external_account type (not service_account). The external_account credentials type contains the path to the OIDC token and the URL of the service account to impersonate, along with other details. Credentials of this type can be generated from the GCP console or programmatically (supported by ccoctl). The operator pod can then consume them from a kube secret. Make the appropriate code changes to the operators so that they can consume these new credentials.
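
As an illustration of item 3, the sketch below checks that a credentials document is of the external_account type and carries the two details named above. It is a minimal sketch, not code from any of the operators: `check_external_account` is a hypothetical helper, and every value in `EXAMPLE` (project number, pool, provider, service account, and the token path) is a placeholder or an assumption.

```python
import json


def check_external_account(raw: str) -> list:
    """Return a list of problems found in a GCP credentials JSON document."""
    creds = json.loads(raw)
    problems = []
    if creds.get("type") != "external_account":
        problems.append(
            f"type is {creds.get('type')!r}, expected 'external_account'"
        )
    if not creds.get("service_account_impersonation_url"):
        problems.append("missing service_account_impersonation_url")
    if not creds.get("credential_source", {}).get("file"):
        problems.append("missing credential_source.file (path to the OIDC token)")
    return problems


# Placeholder values only; the token path is an assumed mount location.
EXAMPLE = json.dumps({
    "type": "external_account",
    "audience": "//iam.googleapis.com/projects/PROJECT_NUMBER/locations/"
                "global/workloadIdentityPools/POOL_ID/providers/PROVIDER_ID",
    "subject_token_type": "urn:ietf:params:oauth:token-type:jwt",
    "token_url": "https://sts.googleapis.com/v1/token",
    "service_account_impersonation_url":
        "https://iamcredentials.googleapis.com/v1/projects/-/"
        "serviceAccounts/SA_EMAIL:generateAccessToken",
    "credential_source": {
        "file": "/var/run/secrets/openshift/serviceaccount/token",
        "format": {"type": "text"},
    },
})

if __name__ == "__main__":
    print(check_external_account(EXAMPLE))
    print(check_external_account(json.dumps({"type": "service_account"})))
```

A check like this could run when the operator reads the secret, so that a leftover service_account key is reported clearly instead of failing later during authentication.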

The following repos need one or more of the above changes:

- https://github.com/openshift/cloud-credential-operator

- https://github.com/openshift/cluster-image-registry-operator

- https://github.com/openshift/cluster-ingress-operator

- https://github.com/openshift/cluster-storage-operator

- https://github.com/openshift/cluster-api-provider-gcp

- https://github.com/openshift/gcp-pd-csi-driver

- https://github.com/openshift/machine-api-operator

- https://github.com/openshift/gcp-pd-csi-driver-operator

- https://github.com/openshift/image-registry

- https://github.com/openshift/docker-distribution

Feature Overview

Enable sharing ConfigMaps and Secrets across namespaces

Requirements

| Requirement | Notes | isMvp? |
|---|---|---|
| Secrets and ConfigMaps can get shared across namespaces | | YES |

Questions to answer…

NA

Out of Scope

NA

Background, and strategic fit

Consuming RHEL entitlements has been a challenge on OCP 4 since it moved to a cluster-based entitlement model, in contrast to the node-based (RHEL subscription manager) entitlement model. To provide an experience sufficiently similar to OCP 3, the entitlement certificates that are made available on the cluster (OCPBU-93) should be shared across namespaces. Otherwise the cluster admin has to copy these entitlements into each namespace, which creates additional operational challenges when updating and refreshing them.

Documentation Considerations

Questions to be addressed:

* What educational or reference material (docs) is required to support this product feature? For users/admins? Other functions (security officers, etc)?

* Does this feature have doc impact?

* New Content, Updates to existing content, Release Note, or No Doc Impact

* If unsure and no Technical Writer is available, please contact Content Strategy.

* What concepts do customers need to understand to be successful in [action]?

* How do we expect customers will use the feature? For what purpose(s)?

* What reference material might a customer want/need to complete [action]?

* Is there source material that can be used as reference for the Technical Writer in writing the content? If yes, please link if available.

* What is the doc impact (New Content, Updates to existing content, or Release Note)?

OCP/Telco Definition of Done

Epic Template descriptions and documentation.

Epic Goal

- Deliver the Projected Resources CSI driver via the OpenShift Payload

Why is this important?

- Projected resource shares will be a core feature of OpenShift. The share and CSI driver have multiple use cases that are important to users and cluster administrators.

- The use of projected resources will be critical to distributing Simple Content Access (SCA) certificates to workloads, such as Deployments, DaemonSets, and OpenShift Builds.

Scenarios

As a developer using OpenShift

I want to mount a Simple Content Access certificate into my build

So that I can access RHEL content within a Docker strategy build.

As an application developer or administrator

I want to share credentials across namespaces

So that I don't need to copy credentials to every workspace

Acceptance Criteria

- OCP conformance suite must ensure that the projected resource CSI driver is installed on every OpenShift deployment.

- The OCP build suite tests that projected resource CSI driver volumes can be added to builds, but only if builds support inline CSI volumes.

- Release Technical Enablement - Docs and demos on how to create a Projected Resource share and add it as a volume to workloads. A special use case for adding RHEL entitlements to builds should be included.

Dependencies (internal and external)

- ...

Previous Work (Optional):

- …

Open questions:

- …

Done Checklist

- CI - CI is running, tests are automated and merged.

- Release Enablement <link to Feature Enablement Presentation>

- DEV - Upstream code and tests merged: <link to meaningful PR or GitHub Issue>

- DEV - Upstream documentation merged: <link to meaningful PR or GitHub Issue>

- DEV - Downstream build attached to advisory: <link to errata>

- QE - Test plans in Polarion: <link or reference to Polarion>

- QE - Automated tests merged: <link or reference to automated tests>

- DOC - Downstream documentation merged: <link to meaningful PR>

User Story

As a cluster admin

I want the cluster storage operator to install the shared resources CSI driver

So that I can test the shared resources CSI driver on my cluster

Acceptance Criteria

- Cluster storage operator uses image references to resolve the csi-driver-shared-resource-operator and all images needed to deploy the CSI driver.

- Shared resources CSI driver is installed when the cluster enables the CSIDriverSharedResources feature gate, OR

- Shared resource CSI driver is installed when the cluster enables the TechPreviewNoUpgrade feature set

- CI ensures that if the TechPreviewNoUpgrade feature set is enabled on the cluster, the shared resource CSI driver is deployed and functions correctly.
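
The install condition in the criteria above can be sketched as a single predicate over the cluster's FeatureGate spec. This is an illustrative sketch, not the cluster storage operator's code: the function name is hypothetical, and it assumes that an individual gate is enabled through the `CustomNoUpgrade` feature set with a `customNoUpgrade.enabled` list.

```python
GATE = "CSIDriverSharedResources"


def should_install_shared_resource_driver(feature_gate_spec: dict) -> bool:
    """Decide whether the shared resource CSI driver should be installed.

    True when the TechPreviewNoUpgrade feature set is enabled, OR when the
    CSIDriverSharedResources gate is enabled individually (assumed to be
    expressed via the CustomNoUpgrade feature set).
    """
    feature_set = feature_gate_spec.get("featureSet", "")
    if feature_set == "TechPreviewNoUpgrade":
        return True
    if feature_set == "CustomNoUpgrade":
        custom = feature_gate_spec.get("customNoUpgrade") or {}
        return GATE in (custom.get("enabled") or [])
    return False
```

With an empty spec (the default feature set) the predicate returns False, so the driver is not deployed on clusters that have not opted in.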

Docs Impact

Docs will need to identify how to install the shared resources CSI driver (by enabling the tech preview feature set)

Notes

Tasks:

- Add the Share APIs (SharedSecret, SharedConfigMap) to openshift/api

- Generate clients in openshift/client-go for Share APIs

- Update the CSI driver name used in the enum for the ClusterCSIDriver custom resource.

- Generate custom resource definitions and include them in the deployment YAMLs for the shared resource operator

- Add YAML deployment manifests for the shared resource operator to the cluster storage operator (include necessary RBAC)

- Ensure cluster storage operator has permission to create custom resource definitions

- Enhance the cluster storage operator to install the shared resource CSI driver only when the cluster enables the CSIDriverSharedResources feature gate
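
To make the Share APIs in the first task more concrete, the sketch below builds a SharedSecret object and a matching CSI volume entry as plain dictionaries. Treat all identifiers as assumptions: the API group and version (`sharedresource.openshift.io/v1alpha1`), the driver name, and the `sharedSecret` volume attribute reflect the tech preview API as commonly documented and may differ in a given release, and the helper names and example resource names are hypothetical.

```python
# Assumed identifiers for the tech preview API; verify against openshift/api.
API_VERSION = "sharedresource.openshift.io/v1alpha1"
DRIVER_NAME = "csi.sharedresource.openshift.io"


def shared_secret(name: str, secret_name: str, secret_namespace: str) -> dict:
    """Build a cluster-scoped SharedSecret that points at an existing Secret."""
    return {
        "apiVersion": API_VERSION,
        "kind": "SharedSecret",
        "metadata": {"name": name},
        "spec": {
            "secretRef": {"name": secret_name, "namespace": secret_namespace}
        },
    }


def shared_secret_volume(volume_name: str, share_name: str) -> dict:
    """Build a pod volume entry that mounts the share through the CSI driver."""
    return {
        "name": volume_name,
        "csi": {
            "driver": DRIVER_NAME,
            "readOnly": True,
            "volumeAttributes": {"sharedSecret": share_name},
        },
    }


if __name__ == "__main__":
    import json

    share = shared_secret("example-share", "example-secret", "example-ns")
    volume = shared_secret_volume("entitlement", "example-share")
    print(json.dumps({"share": share, "volume": volume}, indent=2))
```

A workload in any namespace would reference the share by name in its volume, which is what removes the need to copy the underlying Secret into every namespace.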

Note that to be able to test all of this on any cloud provider, we need STOR-616 to be implemented. We can work around this by making the CSI driver installable on AWS or GCP for testing purposes.

The cluster storage operator has cluster-admin permissions. However, no other CSI driver managed by the operator includes a CRD for its API.

User Story

As an OpenShift engineer

I want to know which clusters are using the Shared Resource CSI Driver

So that I can be proactive in supporting customers who are using this tech preview feature

Acceptance Criteria

- Key metrics for the shared resource CSI driver are exported to Telemeter via the cluster monitoring operator.

Docs Impact

None - metrics exported to telemetry are not formally documented.

QE Impact